A beginning does not explain itself. If the universe truly began—if time, space, matter, and energy came into being—then the obvious question is not merely how it developed, but what could account for physical reality arriving when no earlier physical state existed to produce it. Twentieth-century cosmology sharpened that problem by tracing expansion back toward an apparent origin, making a transcendent first cause seem less like a relic and more like a live philosophical option: the explanation would be something foundational to the physical order, not one more ingredient within it.

(The following is an excerpt from “Does the Universe Paint God Out of the Picture?” by Luke Baxendale. This is part three of four in the book. It may be helpful to read part two first.)

Yet the story is not settled. With the rise of quantum cosmology, an ambitious attempt to unite quantum mechanics and general relativity, many naturalists saw fresh hope that the universe might in some sense be self-explaining. Perhaps quantum laws could do more than describe how the cosmos behaves. Perhaps they could illuminate why there is a cosmos at all. On that telling, God would not be refuted so much as rendered unnecessary, and Stephen Hawking believed quantum cosmology pointed in exactly that direction.

The stakes are high. If quantum cosmology can genuinely explain why there’s something rather than nothing, it would represent one of the most profound scientific achievements in human history. So does it deliver? Can quantum processes really account for existence itself?

To answer that, we need to wade into genuinely strange territory. Quantum cosmology is notoriously challenging—even physicists describe it as baffling. But that strangeness is precisely what makes it fascinating, and understanding the basics is more accessible than you might think.

Stephen Hawking and Quantum Cosmology

Stephen Hawking is widely treated as a major figure in quantum cosmology. As an English theoretical physicist and cosmologist, he defied extraordinary odds to become one of history’s most influential scientists. Over decades, a rare early-onset form of motor neurone disease gradually paralysed him, yet this mathematical prodigy produced groundbreaking work on the universe’s origin and structure—from the Big Bang to black holes—that revolutionised the field.

Although Hawking had played a crucial role in proving the singularity theorems (through landmark work with Roger Penrose in 1970 and the 1973 monograph “The Large Scale Structure of Space-Time” with George Ellis), Hawking found their implications of a beginning philosophically unsettling. What troubled Hawking was that if the universe began at a singular point where physics itself broke down, how could science ever hope to explain the ultimate origin of everything? A beginning of this kind seemed to place the universe’s creation beyond the reach of scientific inquiry. As a result, Hawking began to formulate a cosmological model that he hoped would eliminate this troublesome initial boundary condition.

So, in 1981, Hawking gathered with some of the world’s leading cosmologists at the Pontifical Academy of Sciences, a vestige of the coupled lineages of science and theology located in a grand villa in the gardens of the Vatican. There, Hawking presented a revolutionary idea: a self-contained universe, with no definitive beginning.

But how can something emerge without a beginning? Hawking’s answer required reimagining time itself. He proposed that near what might be considered the beginning of the universe, time would not behave like time at all; it would behave like a spatial dimension. In this framework, the universe becomes self-contained and boundaryless. There’s no distinct starting point, no moment of creation requiring an external cause. The universe simply is, complete unto itself.

This bold proposal hinged on applying quantum mechanics to the universe at its nascent stage. Quantum mechanics (which I’ll refer to as QM) is the study of how the world operates at very small scales, where reality can become quite strange. QM describes the interactions and motions of subatomic particles that exhibit both wave-like and particle-like behaviour.

While the universe today is vast and expansive, at some point in the finite past it was so incredibly small that quantum mechanical effects influenced gravity in fundamentally different ways. Many physicists have proposed that in such extreme conditions, gravitational attraction played by quantum rules—subject to unpredictable fluctuations, with Einstein’s theory of gravity (general relativity) no longer holding up.

Although no adequate theory of “quantum gravity” has yet successfully merged general relativity with QM, Hawking applied speculative QM ideas about subatomic-scale gravity to describe the universe’s earliest state. Working with James Hartle, he developed a quantum cosmological model called the “no-boundary proposal,” which was fully formulated in a 1983 paper.[i]

Understanding this proposal requires grasping a genuinely strange phenomenon at the heart of QM: wave-particle duality. The mystery began in 1801 when Thomas Young shone light through two narrow slits and watched it create rippling interference patterns on a screen, clear evidence that light behaves like a wave. Then in 1905, Einstein’s work on the photoelectric effect revealed that light also comes in discrete little packets of energy called photons. So, which is it: wave or particle? Surely light can’t be both?

The 1920s then brought even stranger revelations. Scientists discovered that electrons, atoms, and other subatomic particles also show this dual nature. But these particles aren’t simply “switching” between being waves or particles. Instead, this suggested something more fundamental about quantum systems: what we observe about their nature depends on how we choose to measure them.

Physicists in the 1920s and 1930s sought to explain or at least accurately describe these strange results. Erwin Schrödinger developed a mathematical framework to characterise wave-particle duality, creating what physicists call a wave function. Think of it as describing a ghostly cloud of possibilities that exists until the moment we measure it. The wave function doesn’t tell us what the particle is before we look—only what we might find when we do look. When the photon, as a wave, encounters an observer or detector, this cloud of possibilities “collapses” into a single definite outcome.

The wave function also describes “superposition,” but this doesn’t mean the particle is literally “in multiple places at once” in any classical sense. Rather, it means the system genuinely lacks definite properties until measured. When a measurement occurs, we observe definite outcomes, and the wave function appears to “collapse” to reflect this new information. However, whether this collapse represents a physical process or simply an update of our knowledge is debated (I lean toward the latter).
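As a rough illustration of the points above, a two-outcome superposition and the rule for reading probabilities from it (the Born rule) can be written as follows. The labels A and B and the coefficients α and β are illustrative symbols, not notation taken from the original text.

```latex
\psi = \alpha\,|A\rangle + \beta\,|B\rangle, \qquad
P(A) = |\alpha|^2, \quad P(B) = |\beta|^2, \quad
|\alpha|^2 + |\beta|^2 = 1
```

The wave function supplies only these probabilities; which outcome is actually found is settled when a measurement takes place.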

It’s also important to clarify that the term “observed” in this context does not necessarily imply that a conscious observer is needed for the wave function to collapse. The “collapse” can also occur through interaction with the environment, as described by decoherence theory, which explains how quantum systems lose their coherent superposition properties through unavoidable interactions with their environment.

Admittedly, it’s all rather perplexing. The notion that a subatomic particle exists without a definite character, represented as a mathematical probability until it interacts or is observed, challenges both physicists and common sense alike. I suspect that much of this “weirdness” arises from our tendency to impose classical mental pictures on quantum phenomena. Our everyday intuitions about reality are, in many cases, simply the wrong tools for understanding the quantum world.

This quantum strangeness, however bizarre, becomes crucial for cosmology. In the first fractions of a second after the Big Bang, the universe would have been so small that QM would have governed how gravity functioned. To describe gravity in this extreme environment, scientists crafted the Wheeler-DeWitt equation—named after John Wheeler and Bryce DeWitt. Many physicists consider it, at the very least, an initial step towards a quantum theory of gravity, representing an effort to unify general relativity and QM within an approach called “quantum geometrodynamics.” It describes the quantum state of the universe without any explicit time dependence. This absence of time is a peculiar feature that gives rise to what physicists call the “problem of time” in quantum gravity.
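Schematically, the contrast with ordinary QM can be put in one line. In the sketch below, \hat{H} stands for the relevant Hamiltonian operator, ψ for an ordinary wave function, and Ψ for the wave function of the universe; operator-ordering and other technical details are deliberately left out.

```latex
\text{Schr\"odinger:}\quad i\hbar\,\frac{\partial \psi}{\partial t} = \hat{H}\,\psi
\qquad\qquad
\text{Wheeler--DeWitt:}\quad \hat{H}\,\Psi = 0
```

The first equation says how a state changes as time passes. The second contains no time derivative at all, which is the formal root of the “problem of time.”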

Here’s where the conceptual leap occurs. In standard QM, the wave function describes probabilities for particles or fields (where an electron might be found, for instance). But in quantum cosmology, the focus shifts to the universe as a whole. Solving the Wheeler-DeWitt equation enables physicists to formulate a wave function for the entire universe, describing a range of potential universes, each with distinct gravitational fields and mass-energy configurations. In other words, this universal wave function outlines the various spatial geometries that a universe could assume, revealing the probability of a universe emerging with specific gravitational and physical properties.

So, to understand how quantum cosmology could be used as a theory that explains the existence of the universe, it’s crucial to focus on three key elements:

- The existence of our universe with its unique attributes—the phenomenon that needs to be explained.

- The universal wave function—the mathematical construct that provides the explanation.

- The Wheeler-DeWitt equation and the mathematical process for solving it—the justification for using the universal wave function as an explanation for the universe.

Stephen Hawking’s primary goal was to determine this wave function—a mathematical expression that encapsulates all possible states of the universe. By solving the Wheeler-DeWitt equation with a specific “no-boundary” condition, Hawking and Hartle calculated the relative probabilities of different universes emerging, including one like ours. If the probability for our universe is non-zero, Hawking argued, this could provide a fundamental explanation for the universe’s existence grounded in the laws of quantum physics.

Together, they proposed the “wave function of the universe,” a timeless quantum description of the whole of spacetime. Using a path integral over compact, boundaryless Euclidean geometries, their no-boundary approach suggested that spacetime could emerge smoothly from a quantum state without an initial singularity. In this framework, the universal wave function can be understood as a solution of the Wheeler–DeWitt equation for a self-contained universe like ours.
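In schematic form, the no-boundary wave function is usually written as a Euclidean path integral. The symbols here follow common textbook usage rather than the original text: h_{ij} is the three-geometry on which the wave function is evaluated, φ is the matter field on it, and I_E is the Euclidean action.

```latex
\Psi[h_{ij}, \phi] \;=\; \int_{\mathcal{C}} \mathcal{D}g\,\mathcal{D}\Phi\; e^{-I_E[g,\,\Phi]}
```

Here C denotes the class of compact Euclidean four-geometries (with matter fields Φ) whose only boundary is the three-surface carrying h_{ij} and φ. Restricting the sum to that class is the “no-boundary” condition itself.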

The implications ripple outward from the mathematics into theology itself. If the universe requires no beginning, it requires no beginner. Hawking had travelled to the Vatican to present cosmology. He left having presented cosmologists with a challenge to the very notion of creation that the institution surrounding them had proclaimed for two millennia.

However, whether his mathematical formalism truly captures physical reality remains intensely debated, as there were a few complications along the way…

The Limitations of Imaginary Time in Explaining the Universe

Hawking realised that accurately calculating the early universe’s likely state was intractable within the framework of real time. To make the problem tractable, he reframed the calculation in “imaginary time”, which is a mathematical trick that transforms how we describe the universe’s birth.

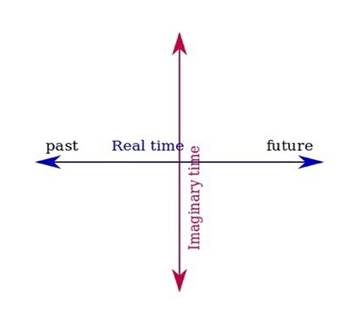

Think of imaginary time as taking our familiar concept of time and multiplying it by ‘i’, the mathematical symbol for the square root of negative one. This might sound absurd, but it’s a standard technique physicists call a Wick rotation: a 90° turn of the time axis in the complex plane. Real time runs past → present → future like an arrow. Imaginary time sits at right angles to that arrow, as if adding a second direction for time in the mathematics. This isn’t a claim that we literally experience a different kind of time, it’s just a computational device that makes otherwise impossible calculations manageable. Here’s a diagram to visualise the idea:

Why does this help? Normally, Einstein’s spacetime has what physicists call a “Lorentzian signature”, meaning time behaves fundamentally differently from the three dimensions of space. When we switch to imaginary time, spacetime becomes “Euclidean”—time starts acting just like another spatial dimension. The abrupt “beginning” that causes mathematical breakdowns in real-time formulations gets smoothed out.
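A minimal worked example shows how the substitution changes the signature. It uses flat spacetime with one spatial dimension and units in which the speed of light is 1; these are simplifying assumptions, not part of the original discussion. Sign conventions for the rotation vary between textbooks, and the one below is a common choice.

```latex
ds^2 = -\,dt^2 + dx^2
\quad\xrightarrow{\; t \,=\, -i\tau \;}\quad
ds^2 = -(-i\,d\tau)^2 + dx^2 = d\tau^2 + dx^2
```

The minus sign that set time apart from space is gone: τ now enters the expression exactly as x does.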

This reimagining led to Hawking’s famous “no boundary” proposal. He envisioned the early universe as having a rounded geometry, much like the curved surface of the Earth. In this analogy, the South Pole represents the “beginning” of the universe, and the circles of latitude represent the passage of time. Just as it’s meaningless to ask what lies south of the South Pole, it becomes nonsensical to ask what occurred “before” the rounded-off section of spacetime in Hawking’s model. The universe has no boundary, no singular first instant, yet the past remains finite.

Hawking understood the theological implications of this work. In ‘A Brief History of Time,’ he presented this result as a challenge to the idea that the universe had a definite beginning in time, and after explaining how this “calculational aid” eliminated the singularity, he famously observed:

“So long as the universe had a beginning, we would suppose it had a creator. But if the universe is really completely self-contained, having no boundary or edge, it would have neither beginning nor end; it would simply be. What place, then, for a creator?”[ii]

Hawking’s proposal sparked widespread belief that he had dismantled the Kalam cosmological argument for God’s existence, and by the 1990s, Hawking single-handedly began to shift perceptions about the Big Bang Theory’s implications.

Nevertheless, Hawking’s approach relied on a clever but controversial mathematical move: replacing real time with “imaginary time.” The crux of the problem wasn’t his mathematics; it was his interpretation of what those equations meant.

When time is confined to the imaginary axis of the complex plane, it remains unclear what physical significance, if any, this carries. Our understanding of time is rooted in real, observable experience—the tick of clocks, the flow of causation, the arrow from past to future. Imaginary time bears no resemblance to this. As a computational tool, it makes the equations tractable, but it becomes conceptually unintelligible as a description of our reality. Yet Hawking spoke of his mathematical expressions as if they carried genuine physical significance, despite incorporating this fundamentally unphysical concept.

Hawking himself acknowledged this limitation. He conceded that once his mathematical depiction of the geometry of space is transformed back into the real domain with a real-time variable (the domain of mathematics that applies to our universe), the singularity reappears. In his own words:

“When one goes back to the real time in which we live, however, there will still appear to be singularities… Only if we lived in imaginary time would we encounter no singularities… In real time, the universe has a beginning and an end at singularities that form a boundary to spacetime and at which the laws of science break down.”[iii]

The “Sum Over Histories” That Still Needs a Stage

Another thorny issue in the Hartle–Hawking no-boundary proposal appears when you dig into the tool it relies on: the path integral, Feynman’s “sum over histories” method. In ordinary QM, the path integral doesn’t picture a particle as calmly selecting one neat route from A to B; instead it calculates outcomes by adding up contributions from all conceivable routes, including the weird and counterintuitive ones.

To make that intuitive, imagine you’re walking from your house to the local shops. Common sense says you take the most direct path. But the quantum calculation acts as if the particle “tries out” every possible itinerary: the straight line, sure, but also long detours, loops, and roundabout wanderings that look absurd from a human point of view. What matters is not that the particle literally takes a scenic tour, but that the mathematics includes every allowed alternative and combines them into the final probability for what you observe.
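The core arithmetic of “adding up contributions” can be sketched with a toy calculation. The Python snippet below is a deliberately simplified illustration, not Feynman’s actual path integral: it assumes just a handful of paths, each contributing an amplitude of equal magnitude with a given phase, and the function name `relative_probability` is hypothetical.

```python
import cmath
import math


def relative_probability(path_phases):
    """Sum one unit-magnitude complex amplitude per path, then square the modulus.

    Toy model: a real path integral sums over a continuum of paths, with each
    phase set by the action along that path. Here the phases are simply given.
    """
    total_amplitude = sum(cmath.exp(1j * phase) for phase in path_phases)
    return abs(total_amplitude) ** 2


# Two paths arriving in step reinforce each other...
in_step = relative_probability([0.0, 0.0])
# ...while two paths arriving half a cycle apart cancel almost exactly.
out_of_step = relative_probability([0.0, math.pi])

print(f"in step:     {in_step:.3f}")
print(f"out of step: {out_of_step:.3f}")
```

The point of the sketch is that amplitudes are added before anything is squared, so paths can reinforce or cancel one another. That is why the final probability depends on every allowed route rather than on a single one.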

Hartle and Hawking scale up that same idea to cosmology. Instead of summing over the possible paths of an electron, they sum over possible cosmic histories: different spacetime geometries and matter configurations the universe might have had. In this approach they define a “wave function of the universe” over an abstract space of possibilities often called superspace (a conceptual arena representing possible 3-geometries and associated matter fields). The output is not just a story about what happened. It is a framework that assigns relative weight, often described in popular terms as likelihood, to different kinds of universe configurations, including the emergence of a universe broadly like ours.

This is also where their famous “no-boundary” move enters. By allowing a particular class of geometries (often discussed using complex or “imaginary” time techniques in expositions), the model aims to replace a hard-edged beginning with something smoother: a universe that can be finite yet boundaryless, avoiding the classical picture of a singular origin where the equations blow up. In that sense, it doesn’t so much push back the beginning as it changes the shape of what “the beginning” looks like in the mathematics.

But here’s where the model blinks. To even start writing down this cosmic path integral, you must already have a structured framework in place: quantum rules, a definition of the configuration space you’re summing over, a prescription for the wave function, and a specific boundary proposal that tells you which histories count. In other words: the proposal begins only after you assume the existence of a very specific kind of mathematical and physical arena in which “summing over histories” is meaningful. Those ingredients aren’t produced by the model; they’re assumed as the preconditions that make the model runnable. So while Hartle–Hawking can remove a temporal boundary in classical spacetime, it risks installing a conceptual boundary instead: the quantum formalism itself becomes the unexplained starting point.

That shift matters because it changes what the proposal is really explaining. If the goal is to tame a classical singularity, smoothing the “edge” of spacetime is a meaningful achievement. But if the goal is to explain existence “from nothing,” then a model that starts by presupposing a specific quantum framework, including path integrals, superspace, wave-function dynamics, and boundary rules, has not eliminated the origin question so much as relocated it. So, the puzzle returns in a new form: why these quantum preconditions, why this rulebook, and why should that be treated as explanatory bedrock rather than as another layer that itself calls for explanation? Hartle–Hawking may give you a universe with no edge in time, but it still relies on an edge in explanation.

Whether that counts as a decisive objection depends on what you think cosmology is allowed to take as given. For some readers, “the laws are just there” is a reasonable stopping point; for others, it’s precisely the point where the real mystery begins. Either way, the tension is real: the no-boundary proposal may be mathematically elegant, but the hard question remains whether it truly accounts for origins or simply shows that, once a particular quantum framework is assumed, a universe like ours could be among the likely outcomes.

Constraints on Mathematical Freedom: The Role of Information in Quantum Cosmological Models

A third issue with the Hartle-Hawking model concerns its need for specific constraints. This isn’t just a technical detail; it cuts to the heart of what we mean by “explaining” the universe.

Here’s the crux. To extract a “wave function of the universe” from the Wheeler–DeWitt equation, physicists face an overwhelming landscape of possible solutions. Hartle and Hawking’s path-integral strategy is often presented as “summing over all possible spacetime geometries,” but in practice the calculation becomes tractable only by focusing on a narrow class of universes broadly like ours: isotropic (looking the same in every direction), closed (self-contained and curved), spatially homogeneous (the same at every location), and possessing a positive cosmological constant.

That narrowing makes the mathematics doable, but it introduces a subtle circularity. The model’s boundary conditions look less like something derived from deeper principles and more like something selected because we already know what the universe is like. So, are we learning what the theory predicts, or recovering what we quietly built into the setup? These filters do not merely describe; they prescribe.

To handle the complexity, Hartle and Hawking lean on the mini-superspace approximation, restricting attention to a small family of highly symmetric geometries and ignoring the rest. Hartle himself is candid about this pragmatic move: “Every time when we do one of those calculations, we have to use very simple models in which lots of degrees of freedom are just eliminated. It’s called mini-superspace… it’s how we make our daily bread, so to speak.”[iv] That honesty is important, because it clarifies that the model’s power comes partly from what it sets aside.

The deeper issue is that the Wheeler–DeWitt equation naturally permits indefinitely many solutions, and a unique universal wave function does not fall out without additional boundary conditions. As Alexander Vilenkin puts it, in ordinary QM boundary conditions are fixed by an external experimental setup, but for the universe there is nothing outside to supply that constraint:

“In ordinary quantum mechanics, the boundary conditions for the wave function are determined by the physical setup external to the system under consideration. In quantum cosmology, there is nothing external to the universe, and a boundary condition should be added to the Wheeler-DeWitt equation.”[v]

That is why this isn’t merely a mathematical quibble. The Wheeler–DeWitt equation does not tell you which boundary conditions to choose, and no deeper theorem of gravity uniquely dictates them either. The selection is made by the theorist, who must restrict geometries and introduce targeted specifications to steer the mathematics toward one outcome among many.

Philosopher Stephen C. Meyer sharpens the point by focusing on agency: the constraints come from an intelligent agent acting with foresight and a goal. For instance, a computer program does not appear spontaneously from circuitry and physical law; it exists because a programmer supplies directives. By analogy, quantum cosmological models often reach “a universe like ours” only after scientists strategically limit mathematical possibilities so the equations can deliver that specific kind of result.

Once you see that, the question expands. It’s not only about the origin of matter, energy, or spacetime, but also about the origin of the information required to make these existence-describing equations yield this universe rather than an enormous range of alternatives. And since we routinely associate high specificity, precision, and foresight with mind in other contexts (think of software code, engineering specifications, strategic planning), it seems reasonable to ask whether something mind-like is implicated here as well.

Now, I’m not claiming this constitutes proof of God. But if our best mathematical accounts of cosmic origins cannot reach a determinate universe without information being strategically introduced by thinking agents (at least at the level of model construction), then perhaps mind is more fundamental to existence than strictly materialistic frameworks tend to allow. In that light, Hawking’s own question about what it is that “breathes fire into the equations” echoes the older theological intuition that “in the beginning was the Word,” pointing to an origin marked by intent and meaning.

Vilenkin’s Quantum Tunnelling Proposal

In the same period that Hartle and Hawking were shaping their quantum-cosmology program, Alexander Vilenkin proposed a different origin scenario: the universe may have come into existence through quantum tunnelling. Framed in terms of a “tunnelling” wave function, his model is one of the main alternatives to the Hartle–Hawking no-boundary proposal. For many proponents, it offers a more quasi-mechanistic way to address why anything exists at all.

To understand Vilenkin’s proposal, we first need to grasp quantum tunnelling, which is one of nature’s strangest tricks. Picture a ball rolling toward a hill. In our everyday classical world, the rules are straightforward: if the ball doesn’t have enough energy to climb over, it stops or rolls back. The hill is an absolute barrier.

But zoom down to the quantum realm, and the rules change. Particles don’t behave like solid little balls; they’re more like fuzzy waves of probability. A particle approaching an energy barrier it “shouldn’t” be able to cross has a small but real probability of appearing on the other side, as if it tunnelled straight through the hill. It doesn’t climb over; it doesn’t smash through. But it does materialise on the other side. This isn’t science fiction. Quantum tunnelling is routinely observed in laboratories and is the principle behind technologies like tunnel diodes and scanning tunnelling microscopes.
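To give a feel for the numbers, here is a minimal sketch of the textbook wide-barrier estimate, in which the chance of crossing a rectangular barrier falls off roughly as exp(-2κL). The function name and the example energies and widths are hypothetical, chosen only for illustration, and the estimate ignores a prefactor of order one.

```python
import math

HBAR = 1.054571817e-34            # reduced Planck constant, J*s
ELECTRON_MASS = 9.1093837015e-31  # kg
ELECTRON_VOLT = 1.602176634e-19   # joules per eV


def tunnelling_probability(energy_ev, barrier_ev, width_m, mass_kg=ELECTRON_MASS):
    """Estimate T ~ exp(-2 * kappa * L) for a particle below a rectangular barrier.

    This is the standard wide-barrier approximation; it drops a prefactor of
    order one, so treat the result as an order-of-magnitude estimate.
    """
    if energy_ev >= barrier_ev:
        raise ValueError("This estimate only applies when the energy is below the barrier.")
    shortfall_joules = (barrier_ev - energy_ev) * ELECTRON_VOLT
    # kappa is the rate at which the wave function decays inside the barrier (1/m).
    kappa = math.sqrt(2.0 * mass_kg * shortfall_joules) / HBAR
    return math.exp(-2.0 * kappa * width_m)


# Hypothetical example: a 1 eV electron meeting a 2 eV barrier.
thin = tunnelling_probability(1.0, 2.0, 0.5e-9)   # barrier 0.5 nm wide
thick = tunnelling_probability(1.0, 2.0, 1.0e-9)  # barrier 1.0 nm wide
print(f"0.5 nm barrier: {thin:.2e}")
print(f"1.0 nm barrier: {thick:.2e}")
```

In this approximation, doubling the barrier width squares the already small probability, which is why tunnelling matters at atomic scales and is negligible for everyday objects.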

Vilenkin’s audacious move was to scale this quantum weirdness up to the entire universe. Here’s how his model works: imagine the universe not as a physical object but as described by a mathematical wave function, essentially a probability distribution for all its possible states. This wave function obeys the Wheeler–DeWitt equation, which is quantum gravity’s analogue of the famous Schrödinger equation.

To make the problem tractable, Vilenkin uses a simplified “minisuperspace” model where the universe’s state is summarised by a single variable: its size. Think of this as plotting the universe’s radius on a graph. At the far left of this graph sits a point representing “zero size,” not just very small, but genuinely zero, where classical spacetime doesn’t exist at all. Moving to the right, you encounter a region representing tiny closed universes with definite (though minuscule) sizes.

Between these two regions (between nothing and something) sits an energy barrier. In classical physics, this barrier would be insurmountable. A universe with zero size would remain at zero size forever. But Vilenkin applies quantum rules: just as an electron can tunnel through a barrier, the universe’s wave function has a nonzero amplitude to tunnel through this cosmic barrier. On one “side” there’s no spacetime at all; on the other “side,” a tiny closed universe suddenly exists. And once this embryonic universe has tunnelled into existence with a definite (but tiny) size, it contains a scalar field that drives inflation.
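For readers who want to see the shape of the barrier, the simplest version of this model is commonly presented in the following schematic form. The notation is an assumption layered onto the text: a is the universe’s radius, H is a constant fixed by the vacuum energy density, and numerical constants and operator-ordering subtleties are absorbed or ignored.

```latex
\left[\frac{d^2}{da^2} - U(a)\right]\psi(a) = 0,
\qquad
U(a) = a^2\left(1 - H^2 a^2\right)
```

In this sketch U(a) is positive for 0 < a < 1/H, which is the classically forbidden region (the “barrier”), and negative for a > 1/H, where an expanding universe is classically allowed. Tunnelling refers to the wave function having nonzero amplitude on the far side of that forbidden region.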

In short, according to Vilenkin, the universe comes into being from a state of nothingness through quantum tunnelling. This process allows it to suddenly appear with a definite size, crossing a barrier that classical physics would treat as impassable. Vilenkin’s theory contrasts with the Hartle–Hawking no-boundary proposal, which views time as finite but without a definite beginning.

The appeal is its mathematical neatness: it sketches a clear mechanism for getting a universe “off the ground” without presupposing an earlier spacetime. The conceptual pressure point, however, is the label Vilenkin uses for this event, creation “from nothing”, because the argument quickly turns on what “nothing” is supposed to mean in a quantum-cosmological setting.

In ordinary usage, “nothing” means the total absence of anything at all: no entities, no structure, no constraints, no laws. Quantum tunnelling, however, is not a free-standing idea. It only has content inside a framework of quantum principles and equations: wave functions, probability amplitudes, and (in quantum cosmology) the Wheeler-DeWitt equation. If spacetime only appears with the universe, where do those rules “live”? Vilenkin’s model suggests that spacetime emerges after the tunnelling event, yet the tunnelling mechanism requires quantum principles to already be operating. So, if quantum laws and fields are already “there,” then something pre-universal exists: some kind of law-governed pre-geometric condition.

This is essentially the same pressure point I flagged earlier in connection with Hartle and Hawking. As Willem B. Drees observes:

“Hawking and Hartle interpreted their wave function of the universe as giving the probability for the universe to appear from nothing. However, this is not a correct interpretation, since the normalisation presupposes a universe, not nothing.”[vi]

The underlying issue is that probability assignments and normalisation conditions presuppose a domain of possibilities to which they apply. Whatever that domain amounts to, it does not look like sheer nonbeing. When advocates claim quantum principles allow creation from nothing, they’ve already smuggled “something” into the equation: non-material, abstract mathematical laws that somehow exist prior to spacetime, matter, and energy. Ironically, this resonates with the kind of transcendent reality that theism posits.

Consider an analogy from standard QM: when a photon hits the slits in a double-slit experiment, we observe interference patterns that suggest superposition behaviour. The Schrödinger equation doesn’t tell us the photon “really” exists in multiple states; it tells us what detection patterns to expect given our experimental setup. The physical situation constrains what our mathematics can meaningfully describe. In a similar way, the Wheeler–DeWitt formalism can be used to generate a “wave function of the universe” and assign weights to different cosmic configurations, but that presupposes some framework in which such configurations and such weighting are meaningful.

So the most cautious conclusion is not that Vilenkin has explained creation from literal nonexistence, but that he has offered a sophisticated mechanism within quantum cosmology for how a nascent universe might overcome a gravitational energy barrier to facilitate expansion. That may be a genuine explanatory advance. It just does not obviously justify the slogan “from nothing” if the story still relies on pre-existing lawlike structure, even if that structure is abstract rather than material.

Quantum cosmology can feel bewildering, and for good reason: the maths describes scenarios so abstract that even physicists disagree about what they mean. The field is still young and short on definitive answers, so treat bold proclamations and fierce criticisms with a sceptical eye. The good news is that we will now shift to firmer ground, focusing on philosophical ideas that I think are easier to follow.

In The Grand Design, Stephen Hawking makes a striking claim: “Because there is a law such as gravity, the universe can and will create itself from nothing. Spontaneous creation is the reason there is something rather than nothing, why the universe exists, why we exist. It is not necessary to invoke God to light the blue touch paper and set the universe going.” Lawrence M. Krauss echoes this view, influenced by Alexander Vilenkin’s work: “The laws themselves require our universe to come into existence, to develop and evolve.”

When Hawking speaks of “a law such as gravity,” he’s invoking the full machinery of quantum cosmology: the universal wave function, the Wheeler–DeWitt equation, and theories of quantum gravity. His underlying assumption treats physical laws as more than mere descriptions: they become explanatory tools that account for why anything exists at all.

This idea carries an ancient echo. When scientists suggest mathematical models can account for the universe’s existence, they’re channelling Plato and Pythagoras, who reasoned that if mathematics describes the effects of various phenomena, perhaps the underlying causes are mathematical too.

So here’s the crucial question: can mathematical models actually cause physical phenomena?

Professor John Lennox offers a helpful distinction between causes and laws. Causes are particular events or conditions that bring about outcomes. Scientific laws, by contrast, summarise stable relationships we observe in nature. Gravity does not “make” an apple fall in the way a hand makes a ball move. Rather, gravitational laws describe how mass and energy behave such that falling occurs.

Consider a simple example from arithmetic. The equation 1 + 1 = 2 is true, but the formula itself creates nothing. If I save $100 this month and another $100 next month, the mathematics confirms I have $200. But if I don’t actually save the money, no amount of mathematical reasoning will grow my bank account. The numbers describe what happens when certain conditions are met; they do not bring those conditions into being.

This distinction matters when we evaluate claims about cosmic origins. It is one thing to say that physical laws illuminate the universe’s structure and help us model how its history unfolds. It is another to say that those laws, or the equations that express them, created matter, energy, and spacetime in the first place. Confusing the two is a bit like claiming that longitude and latitude lines are responsible for the Hawaiian Islands existing. The grid helps you locate and describe the islands, but it does not produce them.

Stephen Hawking famously proposed that “the law of gravity” explains “why there is something rather than nothing.” But that framing risks a category mistake. Laws, stated mathematically, function as descriptions of regularities, not as agents with causal power. Even a complete “theory of everything” or the discovery of some fundamental new law wouldn’t bridge the conceptual gap between absolute nothingness and the emergence of something.

Quantum cosmology can make this tension sharper. Take the universal wave function, often treated as describing a range of possible universes with different geometries and mass-energy configurations, held in a kind of superposition. That is fascinating as physics. Yet a wave function, as a mathematical object, does not by itself explain why one concrete universe is realised rather than another, or why there is any physical reality to realise at all. If we are truly talking about the absence of spacetime, matter, and energy, it is not obvious what physical process could operate to generate them.

The Wheeler–DeWitt equation, and the idea of curvature–matter pairings in superspace, may capture something deep about quantum gravity, but they still function as abstract representations. Quantum cosmology can map the landscape of possible worlds with impressive reach, but it still doesn’t settle the question, “Why does anything exist?”

So if mathematical laws do not themselves create the universe, where does their apparent explanatory power come from? Vilenkin wrestled with that question, and at points even entertained the possibility that something like “mind” might sit behind the intelligibility of the whole picture:

“Does this mean that the laws are not mere descriptions of reality and can have an independent existence of their own? In the absence of space, time, and matter, what tablets could they be written upon? The laws are expressed in the form of mathematical equations. If the medium of mathematics is the mind, does this mean that mind should predate the universe?”[vii]

Vilenkin’s question opens up intriguing terrain because it presses on a basic issue: if there were no physical universe at all, in what sense could “laws” be said to exist? One response is to treat mathematics as somehow generative, as if equations themselves could produce a world. But that starts to look less like physics and more like metaphysics, because it attributes causal power to what seem to be abstract descriptions. A more careful way forward is to ask what sort of thing mathematics is, and how it relates to the physical world described by quantum cosmology. At least three broad options are available:

- Mathematics is a post-universal mental phenomenon. It is merely a useful description of reality, which is not fundamentally mathematical.

- Mathematics exists prior to the universe in an abstract, immaterial realm independent of mind.

- Mathematics is a mental phenomenon and exists prior to the universe.

Among the three options, I believe the third makes the most sense based on our uniform experience. This is because:

- The world appears to conform fundamentally to mathematical principles independently of human minds, which excludes the first conception. The first conception would also rule out the idea under discussion: that mathematical laws cause the universe to come into existence.

- There is no logical reason to believe the contents of an abstract realm independent of mind would be accessible to minds. As mathematics is accessible to our minds, this rules out the second conception.

- Mathematics is a mental phenomenon and the universe fundamentally conforms to mathematical principles, so this fits with the third conception.

If mathematical principles exist independently of spacetime, yet mathematics is accessible to minds rather than cut off from them, doesn’t that imply a mind transcending the universe, within which mathematical principles take shape? Unlike a realm of disconnected, mindless abstract mathematics, this picture explains why our minds can interact with mathematics: our minds are of the same kind as the mathematics-generating Mind.

Of course, here we’re going well beyond the realms of empirical observation and scientific inquiry into deeply speculative philosophy. But the argument holds that a transcendent Mind offers a more likely explanation for the universe than abstract, mindless mathematics floating in some Platonic void.

And to be clear, claiming mathematics is mental doesn’t mean we invent it. The proposal is that an ultimate Mind established mathematical truths as part of the created order, and our capacity to discover them reflects our being fashioned in the image of that source. There’s a resonance between human minds and the “foundational mind” (for want of a better term).

This alignment isn’t random. A cosmos operating on complex mathematical principles and minds capable of comprehending those principles are not a coincidental pairing, as they would be if mathematics pre-existed in an abstract, mindless realm. The pairing makes better sense if the universe is the product of an intelligent mind, with our minds naturally attuned to its underlying order.

We also have plenty of experience of ideas originating in the mental realm and, through deliberate effort, producing entities that embody those ideas: plans become buildings, designs become machines. In that light, even if matter and energy could emerge spontaneously, the universe’s remarkable structure and mathematical intelligibility can seem to point beyond brute fact toward something like intelligence as a plausible source of order. General relativity still presses toward a temporal beginning, and while quantum cosmology explores what might underlie that boundary, it can leave conceptual space for a conscious origin rather than closing it off. That is why Vilenkin’s own question is suggestive: if mathematics is the “medium” of mind, the deep fit between mathematics and the cosmos may be read as consistent with a mind “above” the universe.

Between Speculation and Certainty: Stephen Hawking’s Contributions and the Debate on Quantum Cosmology

We’ve covered a lot of ground in this chapter, so let me draw the threads together. Quantum cosmology is an intriguing research program, but it’s also a place where models, assumptions, and philosophical interpretation get tightly intertwined. The key issue, then, is not whether the maths is clever, but what it actually licenses us to say about God and “nothing.”

That’s why it makes sense to end with Stephen Hawking. He was a titan of science and an inspiring symbol of human achievement in the face of adversity. His pioneering work in the 1960s and 1970s set the stage for research areas that continue to flourish today, and he inspired millions, myself included, to delve into the mysteries of the universe. Yet like many brilliant minds, Hawking also had a blind spot. He sometimes spoke with the confidence of settled theory when he was really discussing ambitious, unconfirmed ideas.

You can see that pattern in the way he talked about baby universes, higher dimensions, or a “theory of everything.” These may be fascinating proposals, but they remain proposals. At times, Hawking blurred the line between what had been rigorously established and what was still speculative, especially when the speculation served a larger story about what science could ultimately explain.

That tendency became sharper when he turned to quantum cosmology. Along with physicists like Lawrence Krauss, he saw it as a way to sidestep the theistic implications of the Big Bang. The mathematics, they argued, showed that our universe did not necessarily have a beginning and could have emerged from “nothing.” Quantum cosmology, Hawking claimed, removed the need for a transcendent creator. It was the ultimate scientific answer to the God hypothesis.

But that confidence looks premature once we ask what “nothing” actually means inside these models. Imagine I told you I could build a house from nothing, but I still needed pre-existing building codes, an infinite warehouse stocked with every possible material, and a foundational rule like “Start with the door frame.” Would you believe I had created something from nothing? Or would you recognise that I had simply hidden the starting conditions in the fine print?

Something similar happens in quantum cosmology. When Hawking and James Hartle solved the Wheeler–DeWitt wave equation, they did so by substituting imaginary time into the formula. It’s worth pausing on that detail, because it raises questions about how directly this model describes our actual universe. Their solution also required the intelligent selection of boundary conditions compatible with a universe like ours. As a result, they did not explain the existence of the universe from unaided nothing. They needed a pre-existent something (a formal framework), and they needed intelligence to add information. Moreover, if mathematics is fundamentally a mental reality that seems to exist independently of the physical universe, it may point to a mind beyond the universe.

I think this is what Hawking missed. His earlier work on the Big Bang singularity pressed toward something genuinely unsettling: the possibility that the universe’s existence cannot be exhaustively captured by physical description alone, and may point beyond nature to a non-physical cause. As a theoretical physicist, he naturally wanted a physical explanation he could write down, and quantum cosmology likely seemed to offer a way to keep the discussion within the language of equations. But some questions, I think, resist that kind of treatment. Some answers need to be encountered rather than derived, and that’s not anti-evidence. It is simply a recognition that different kinds of questions require different kinds of understanding.

That is why I doubt the natural sciences will ever answer, “Why is there something rather than nothing?” I’ve read popular science books from scientists I admire that promise exactly that, but I remain sceptical. It’s not even clear the question is scientifically answerable. A genuine “nothing” would contain no equations, no laws, not even quantum fluctuations. Natural science, by its very nature, starts with something. It must. Science is designed to explain how things work and how they came to be as they are, but not why there is anything at all for those explanations to describe. That’s not a failing of science. It’s simply outside science’s domain. This is where the theistic answer enters the conversation. You might dismiss a spiritual proposal as trivial, just another label for our ignorance. But perhaps the more honest approach is to recognise that there is a gap, that it was somehow bridged, and that we’re confronting something genuinely mysterious, something outside the physical domain. If that’s the case, then we should look for the most plausible explanation we can find, even if it takes us beyond the boundaries of natural science.

[i] https://journals.aps.org/prd/abstract/10.1103/PhysRevD.28.2960

[ii] Hawking, S. A Brief History of Time: From the Big Bang to Black Holes (1988). London, UK: Bantam, 140-141.

[iii] A Brief History of Time, 136.

[iv] Hartle, J. “What Is Quantum Cosmology?” Closer to Truth. Retrieved from https://www.youtube.com/watch?v=s6wPcq5yb7s

[v] Vilenkin, A. “Quantum Cosmology”, 7.

[vi] https://link.springer.com/article/10.1007/BF00670817

[vii] Vilenkin, A. “Many Worlds in One”, 205.